Web Form Data Ingestion Pipeline

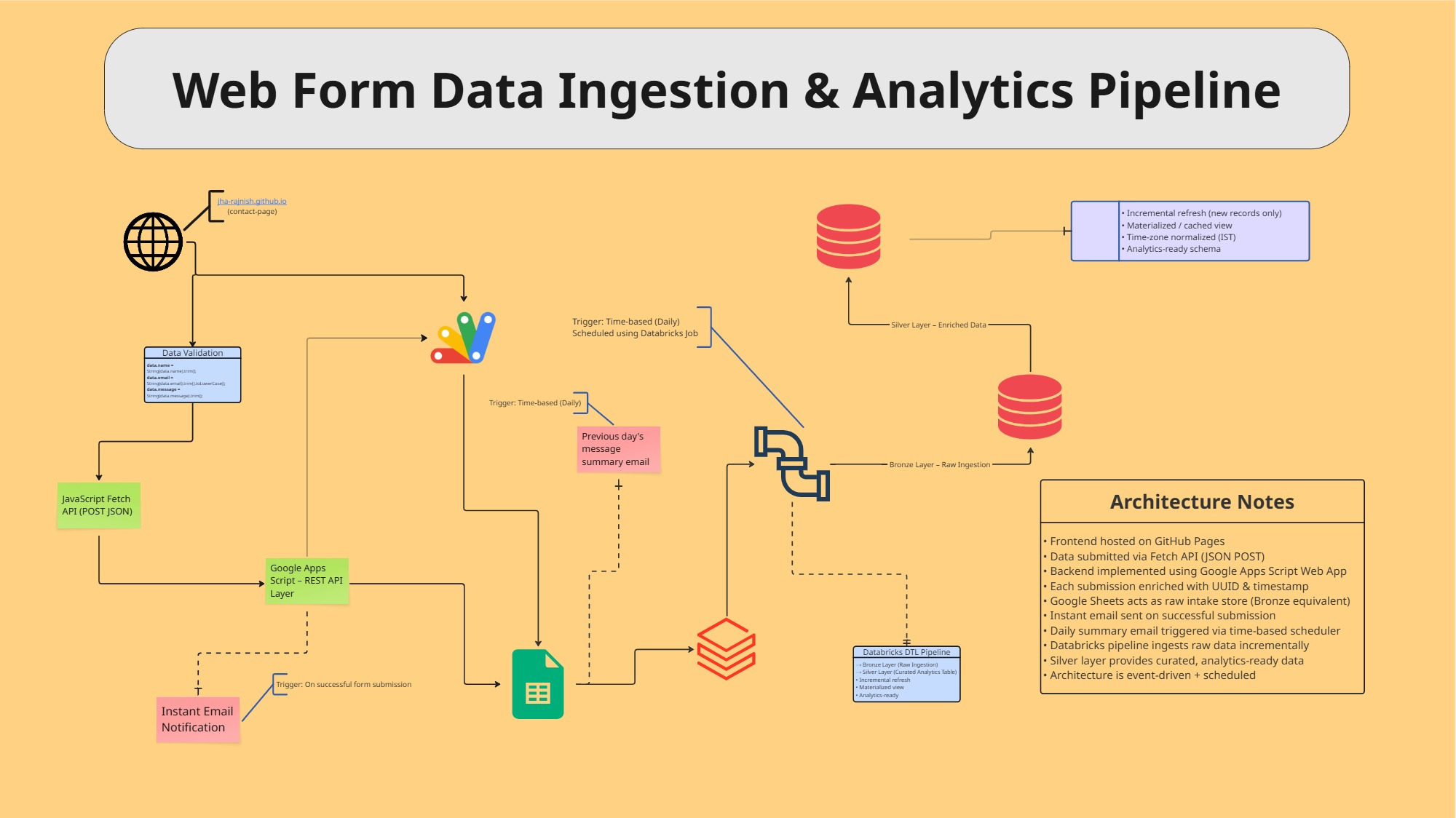

Event-driven, end-to-end web form data ingestion and analytics pipeline combining a serverless Google Apps Script backend, Google Sheets bronze layer, and Databricks incremental processing for analytics-ready datasets.

GAS Serverless API

2 Lakehouse Tiers

∞ Incremental Loads

DLT Pipeline

01 — Objective

Implement an end-to-end, event-driven web data ingestion and analytics pipeline that captures, validates, stores, and analyzes form submissions in a scalable and reliable manner. It combines a GitHub Pages frontend, a Google Apps Script REST API, a Google Sheets bronze layer, and a Databricks DLT pipeline with an incrementally refreshed silver layer for analytics-ready output.

02 — Architecture

System Overview

03 — Pipeline Architecture

Ingestion · Bronze · Silver

Ingestion

The GitHub Pages frontend submits JSON via the Fetch API. Google Apps Script enriches each record with a UUID and timestamp, then triggers an instant email on receipt.

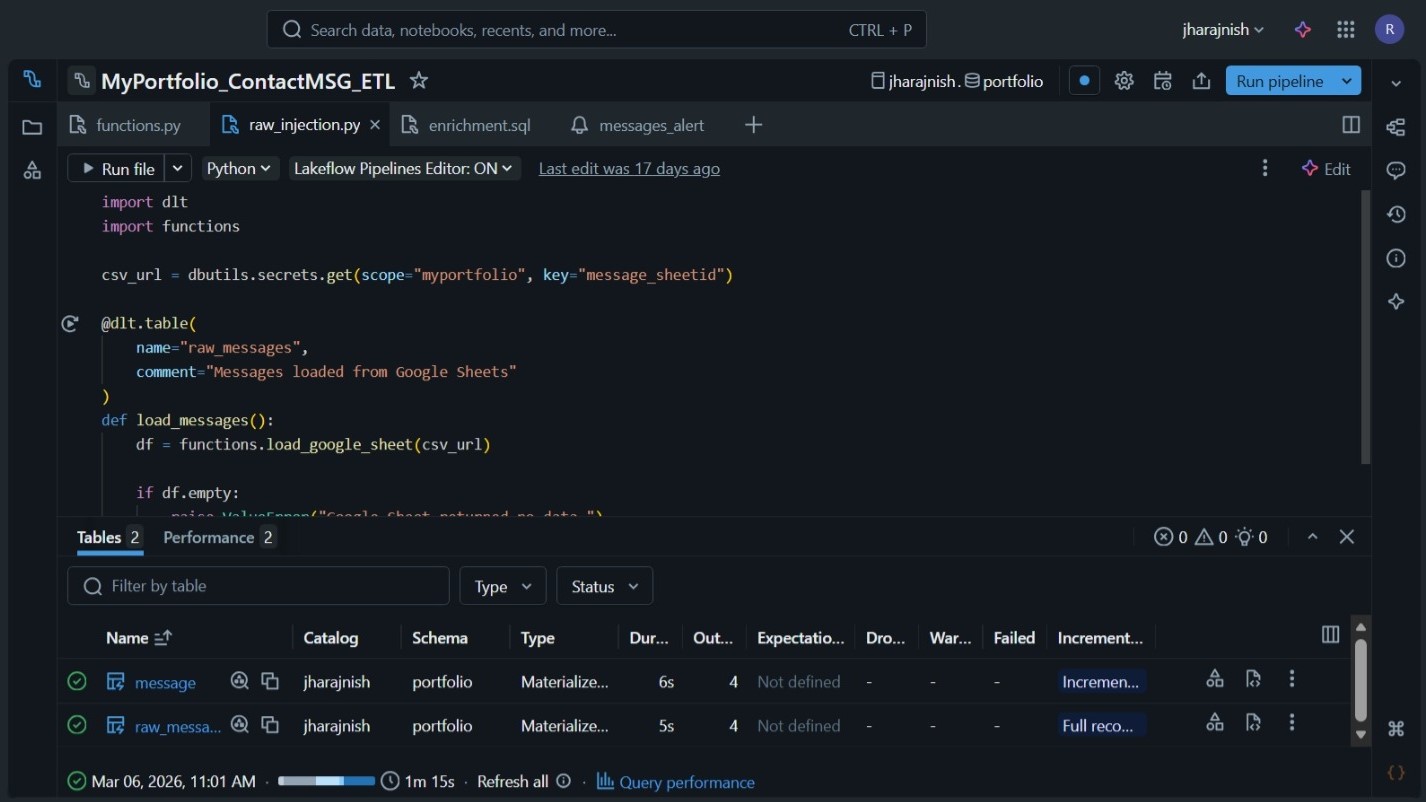
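The enrichment step can be illustrated with a minimal sketch. The actual backend runs in Google Apps Script; this Python version only mirrors the logic, and the field names `submission_id` and `received_at`, as well as the sample payload, are assumptions rather than names taken from the project.

```python
import json
import uuid
from datetime import datetime, timezone


def enrich_submission(payload: dict) -> dict:
    """Return a copy of the form payload with a unique ID and receipt time added."""
    record = dict(payload)  # copy so the caller's dict is not mutated
    record["submission_id"] = str(uuid.uuid4())  # hypothetical field name
    record["received_at"] = datetime.now(timezone.utc).isoformat()  # hypothetical field name
    return record


# Simulate the JSON body a frontend might POST (sample fields are made up).
body = json.dumps({"name": "Ada", "email": "ada@example.com"})
record = enrich_submission(json.loads(body))
print(record)
```

Generating the ID and timestamp on the server side, rather than in the browser, keeps both values independent of whatever the client sends.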

Bronze

Google Sheets acts as the raw intake store: append-only records are ingested incrementally into Databricks via the DLT pipeline.

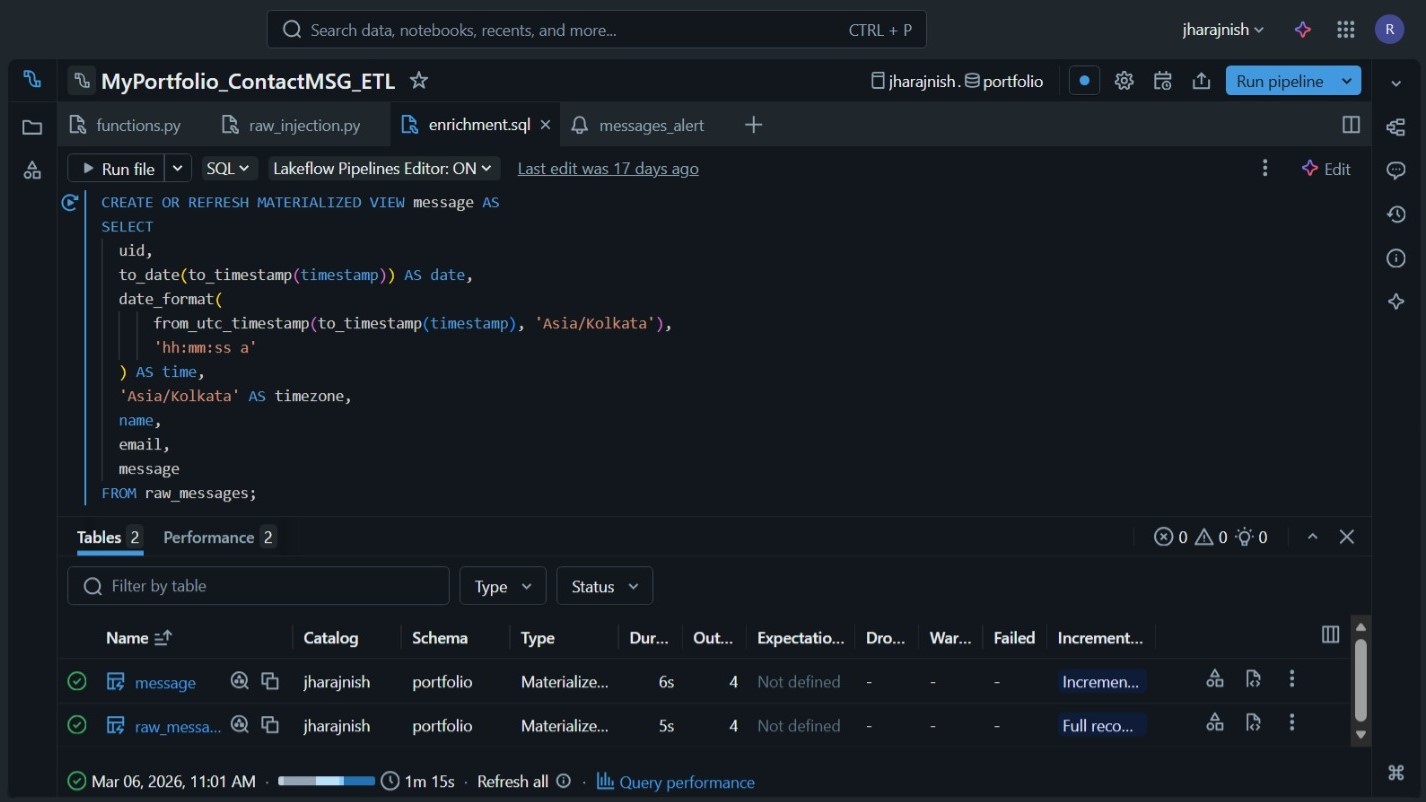
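One common way to load an append-only source incrementally is to track a watermark, the latest timestamp already loaded, and pick up only newer rows on each run. The sketch below shows that idea in plain Python; it is not the Databricks pipeline itself, and the row layout and the `load_new_rows` helper are hypothetical.

```python
from datetime import datetime


def load_new_rows(sheet_rows, target, last_loaded_at):
    """Append rows newer than the watermark to target; return the new watermark."""
    new_rows = [r for r in sheet_rows if r["received_at"] > last_loaded_at]
    target.extend(new_rows)
    if new_rows:
        last_loaded_at = max(r["received_at"] for r in new_rows)
    return last_loaded_at


# The "sheet" is an in-memory stand-in for the append-only source.
sheet = [
    {"id": "a", "received_at": datetime(2024, 1, 1, 9, 0)},
    {"id": "b", "received_at": datetime(2024, 1, 1, 10, 0)},
]
bronze = []

# First run loads everything; the second run loads only the newly appended row.
watermark = load_new_rows(sheet, bronze, datetime.min)
sheet.append({"id": "c", "received_at": datetime(2024, 1, 2, 8, 0)})
watermark = load_new_rows(sheet, bronze, watermark)
print([r["id"] for r in bronze], watermark)
```

A strict `>` comparison skips any late row whose timestamp equals the watermark, so a real pipeline would need a tie-breaker such as the record's unique ID.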

Silver

An incrementally refreshed materialized view applies cleansing, normalisation, and transformations, with no reprocessing of historical data.
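To make the cleansing and incremental-refresh idea concrete, here is a small plain-Python sketch. The specific rules (collapsing whitespace, lowercasing emails) and the field names are assumptions for illustration; the source does not list the actual transformations, and in Databricks the refresh is handled by the materialized view rather than by hand-written code like this.

```python
def clean_record(raw: dict) -> dict:
    """Apply simple, assumed cleansing rules to one raw record."""
    return {
        "submission_id": raw["submission_id"],
        "name": " ".join(raw.get("name", "").split()),  # collapse stray whitespace
        "email": raw.get("email", "").strip().lower(),  # normalise case
    }


def refresh_silver(silver: dict, new_bronze_rows: list) -> dict:
    """Clean only the newly arrived rows; existing silver rows are left untouched."""
    for raw in new_bronze_rows:
        silver[raw["submission_id"]] = clean_record(raw)
    return silver


silver = {}
refresh_silver(silver, [
    {"submission_id": "a1", "name": "  Ada   Lovelace ", "email": " Ada@Example.COM "},
])
print(silver["a1"])
```

Keying the silver rows by submission ID makes a repeated refresh with the same input idempotent.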

04 — Pipeline Highlights

What's Under the Hood

Frontend hosted on GitHub Pages with client-side validation before submission

Data submitted via the JavaScript Fetch API as a JSON POST request

Backend implemented as a Google Apps Script Web App acting as a serverless REST API

Each submission enriched with a UUID and timestamp for traceability

Google Sheets acts as the raw intake store, a lightweight Bronze layer equivalent

Instant confirmation email triggered on successful submission

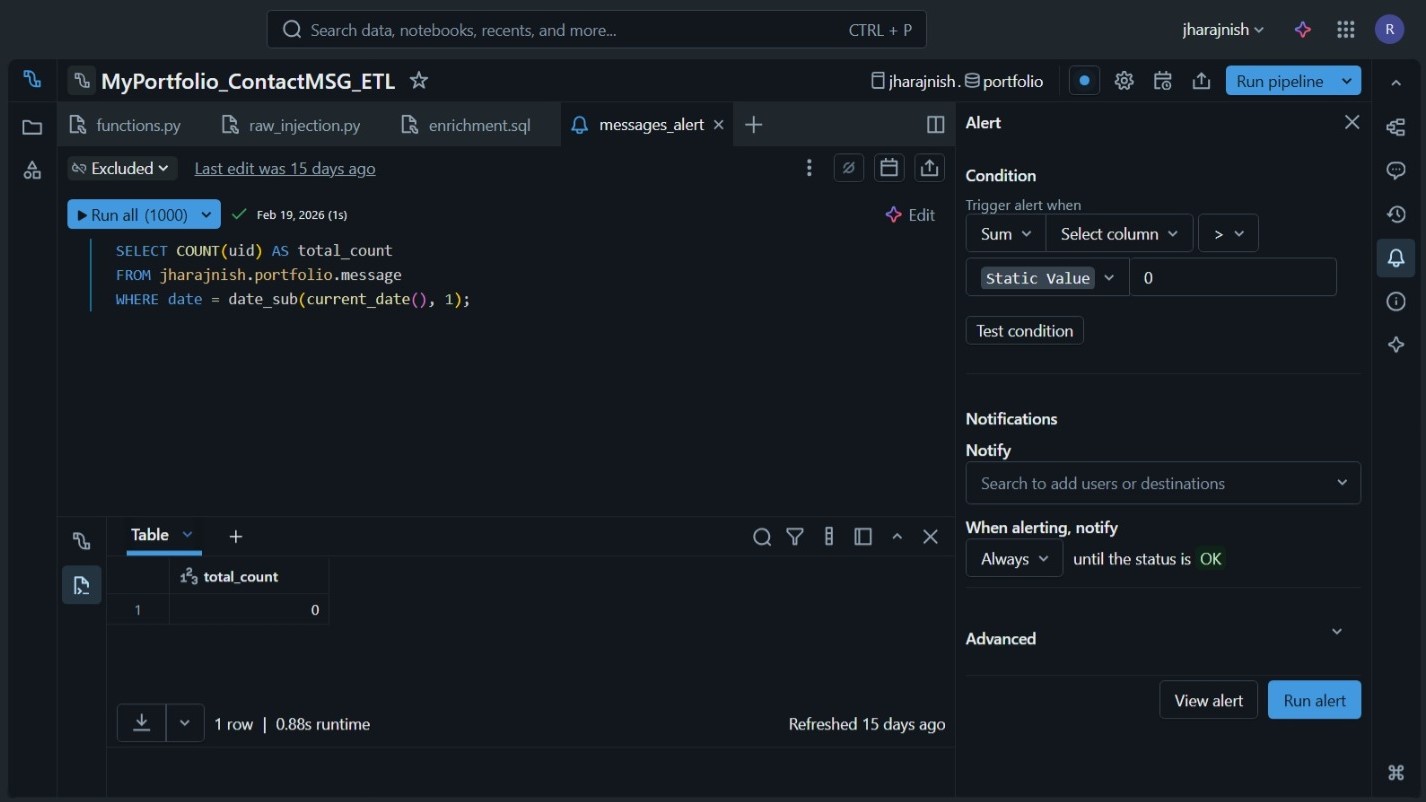

Daily summary email with the previous day's metrics via a time-based scheduler

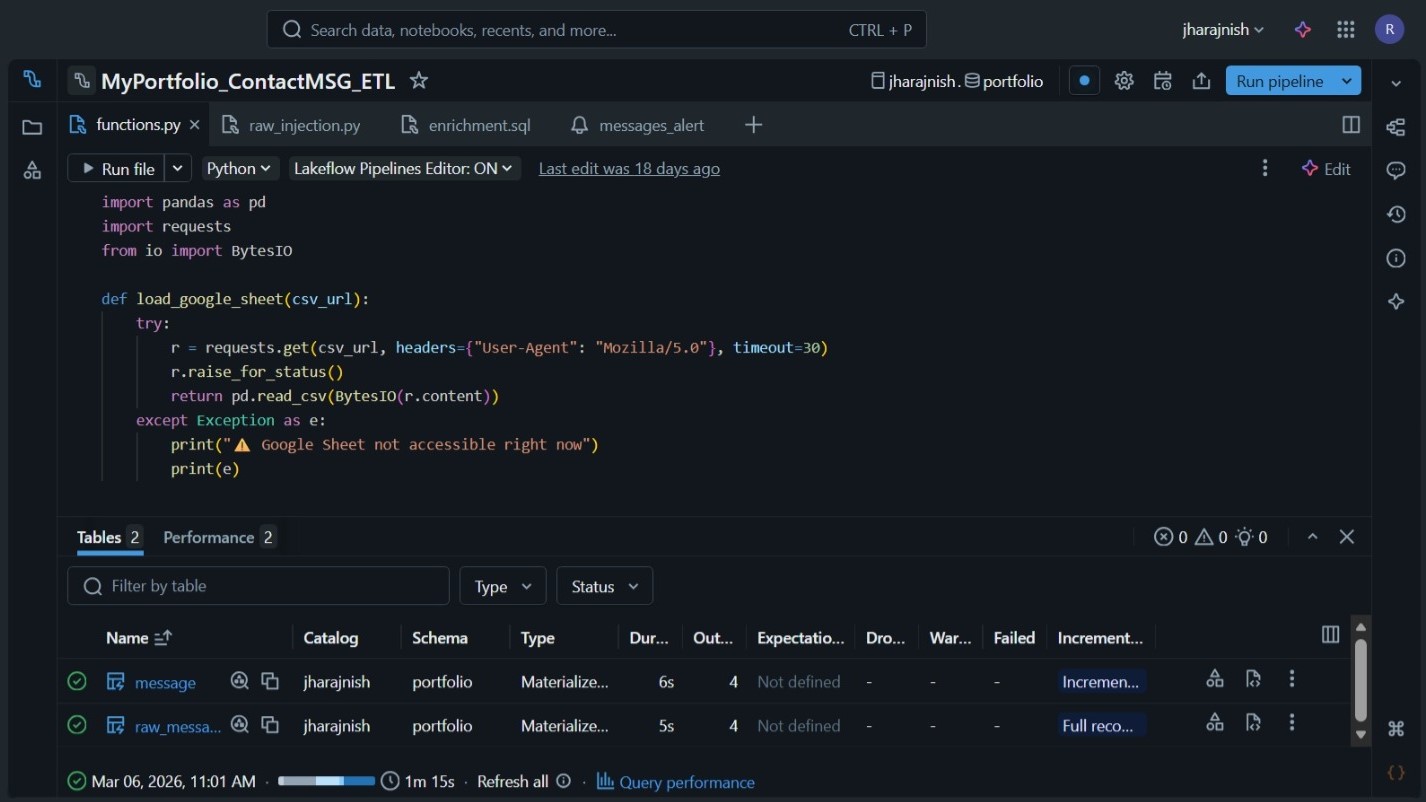

Databricks DLT pipeline ingests raw data incrementally from Google Sheets

Silver layer built as an incrementally refreshed materialized view, with no historical reprocessing

Event-driven ingestion combined with scheduled processing for a cost-efficient architecture
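The daily summary highlighted above could be computed along the following lines. This is a hedged sketch only: the source does not say which metrics the email contains, so the total count and per-hour breakdown, the `received_at` field, and the `previous_day_summary` helper are all assumptions.

```python
from collections import Counter
from datetime import date, datetime, timedelta


def previous_day_summary(records, today):
    """Summarise submissions received on the calendar day before `today`."""
    target = today - timedelta(days=1)
    rows = [r for r in records if r["received_at"].date() == target]
    return {
        "date": target.isoformat(),
        "total_submissions": len(rows),
        "by_hour": dict(Counter(r["received_at"].hour for r in rows)),
    }


records = [
    {"received_at": datetime(2024, 3, 4, 9, 15)},
    {"received_at": datetime(2024, 3, 4, 9, 40)},
    {"received_at": datetime(2024, 3, 4, 17, 5)},
    {"received_at": datetime(2024, 3, 5, 8, 0)},  # same day as the run, so excluded
]
summary = previous_day_summary(records, date(2024, 3, 5))
print(summary)
```

Passing `today` in explicitly, instead of reading the clock inside the function, makes the summary easy to test and to re-run for a past date.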

05 — Software Toolkits

Built With